General concerns

Any system nowadays holds a lot of dependencies and integrations to other systems. Knowing a bit of basic network knowledge is essential in being able to perform a technical tester task.

The last few decades the network performance improvement has been far greater than the rest of the computer industry (the rest of computerology more or less following Moore's law). During the 90:s and early third millennia engineers had to resort to client/service based rich clients to get usable systems. Calculations on the mainframe computers was too expensive for excessive use, and networks was too limited for centralized architectures that utilizes networks a lot. Hence the client/server model arose. This uses a result sets of relevant data that was sent from server to the client for manipulation client side and then sent back for storage.

When the networks improved many of the client/server systems were still in use (many of them still are), but the problem of keeping all clients updated to the same version was a problem. This made mechanisms like Java WebStart and similar technologies to make sure all clients have the same software version a thing.

Today the organizational landscape is diverse. The networks are now good enough for the old dream of cloud computing - not having to care about where your server is at, making way for super cost-efficient server operation. There are still a lot of client/server systems out there, and they are still widely used. Most systems nowadays are centralized in the sense that they always read the whole application code needed from a centralized source (cache excluded). Web based systems are the norm nowadays.

The more the systems rely on networks to use, the more relevant it the network knowledge become.

The OSI model

The seven layers of the OSI model has persisted for half a century or so. Sometimes you'll hear stuff like "Layer 5 switch", and it relates to the OSI model. It's a model of the different protocols and components involved in transmitting data from one system to another and is highly relevant for troubleshooting.

| OSI model layer | Description | Examples |

|---|---|---|

| Layer 7 - Application layer | Using the data | Spotify, Web browser |

| Layer 6 - Presentation layer | Manages data streams and make it available for applications | HTTP Client |

| Layer 5 - Session layer | Manages sessions | HTTP |

| Layer 4 - Transport layer | Collision management, resending, assembles parts of responses to full responses. | TCP, UDP |

| Layer 3 - Network layer | Routes traffic to correct host | IP, ICMP, ARP |

| Layer 2 - Data link layer | Transfers data between adjacent networks (router jumps) | PPP (Point-to-Point Protocol) |

| Layer 1 - Physical layer | Cables and NIC (Network Interface Cards). Sometimes include NIC drivers. | TP cable |

TCP/IP

TCP/IP is the basis of modern corporate networks as well as the Internet. It's a stack of protocols that to some extent matches the OSI model.

TCP/IP handles anything from addressing of computers, transmission mechanics, and delivery confirmation. It's also extendable for encrypted data transfer through SSL (Secure Socket Layer) and much more wich makes it extremely versatile.

TCP vs UDP

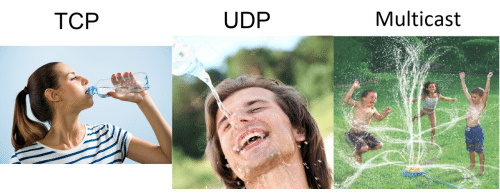

The most common protocol at this level is the TCP protocol. This protocol makes sure the full request is received and requests re-sending and more. This might build roundtrip times on poor networks.

Sometimes it's more important with an even flow of data than that every bit is received correctly. It might for example be streaming video and audio data, or sensor data. For that purpose, the UDP works better. It leaves it up to higher levels to make the most of the data it receives but does not guarantee that all data is received.

IP

The IP protocol manages addresses and information about routing between gateways between networks. The IPv4 is still the most used one even if IPv6 has been out for a long time.

The IPv6 was a promising piece of technology, with built in network prioritizaion, a network address space wide enough for all hosts to have unique addresses, built in routing help and some security features.

However, it has had a lot of security issues, and poorly implemented IPv6 stacks has given the protocol a bit of a bad name.

Since IPv4 is still the most widely used IP protocol, this section will deal with techniques and technologies that is good to know about the IPv4.

Reserved addresses and ranges

Some IPv4 addresses carry extra information that tell you something more about them:

To understand address spaces the subnet mask is relevant. You might have seen numbers like:

| 255.255.255.0 | C net. Maximum of 255 hosts on the network. |

| 255.255.0.0 | B net. Holds quite a lot of computers. |

| 255.0.0.0 | A net. Can hold a huge number of computers. |

Reserved IPv4 addresses and ranges

| Address | Use |

|---|---|

| 127.0.0.1 | Loopback interface. This comes back to your own computer network interface. |

| Addresses starting with 10 | (E.g. 10.0.12.123) These are a full A sub-net of reserved internal addresses that cannot be exposed directly on the Internet. A lot of organizations use this for their internal network space but uses a smaller subnet mask for efficient routing. |

| Addresses starting with 192.168 | These are a smaller range of IP addresses that are reserved from direct exposure on the Internet. |

| Addresses ending with 1 | By convention these are generally the router. You may confirm this with the ipconfig or ifconfig command |

| Addresses ending with 0 | Broadcast addresses. Don't use them unless you know what you do. |

There are different types of broadcasts. Both multi-cast, to a number of targeted tiers, and full broadcast (to all hosts on a network) is called broadcasts. Some uses of broadcasts are hosts broadcasting their names to routing components for use in Windows Redirector service and other.

DNS - Domain Name Service

The DNS is a very useful component. It's a network component that maps computer names to IP-addresses. This makes the Internet work better. Imagine having to memorize all numbers for any server you would want to browse to. The DNS makes sure that when you use a computer name you end up at the right server. Note that the DNS don't care about the full endpoint but only the IP address to computer name.

NAT - Network Address Translation

Since there are far more hosts than there are IPv4 addresses the use of NAT, Network Address Translation, is widespread.

Network address translation (NAT) is a method of remapping one IP address space into another by modifying network address information in the IP header of packets while they are in transit across a traffic routing device.[1] The technique was originally used as a shortcut to avoid the need to readdress every host when a network was moved. It has become a popular and essential tool in conserving global address space in the face of IPv4 address exhaustion. One Internet-routable IP address of a NAT gateway can be used for an entire private network.

Most likely your own home router is using NAT.

DHCP

One other mechanism to deal with the limited number of IPv4 addresses is re-using IP addresses that is not in use. A DHCP server hands out addresses for "lease" for a limited time and keep track of what addresses are available for hand-out.

To check your IP settings, use the command ipconfig /all on Windows, or ifconfig on Mac/Unix/Linux

Certificates and SSL

In its basic version no security is built into the TCP/IP stack. The IPv6 made an effort to include security, but was never fully successful in doing this. Instead everyone resorted to the basic mechanisms of IPv4 - using SSL (Secure Socket Layer).

SSL is used in any TCP/IP based secure version (HTTPS, FTPS, SMTPS - where the trailing S stands for Secure).

SSL is an encryption mechanism depending och certificates. A ritual of a series of handshake actions between the termination ends of a communication route establishes a secure line over wich data can be sent without being interpretable by anyone else.

Certificates has one public part and one private part. The public part is shared, and like a jigsaw puzzle it takes part in decrypting the data sent.

Never ever trust any homemade security mechanism. Any hacker will break this in seconds. Only well proven security standards should be trusted.

Tricks

The hosts file

One trick sometimes used for controlling test environments is tampering with the operating system hosts file.

This file resides in your computer and explicitly maps computer names to IP addresses. This could be very useful when you want to:

- Make sure no communication is reaching a certain host (e.g. setting mailserver.mycompany.com to localhost to prevent emails from being sent)

- Redirecting between test environments by re-assigning test.mysystem.mycompany.com to different IP addresses depending on what you want to run with

Cheat sheet

| Command | Use |

|---|---|

| tracert | Track router paths for communication |

| ping | Connect check and roundtrip time verification. Has more flags than most people know of. |

| ipconfig/ifconfig | Information about current IP settings on Windows ipconfig is used but on Mac/Linux/Unix the ifconfig command is used. |

| telnet | Program to connect to specific ports |

| nslookup |

HTTP(S) - HypertText Transfer Protocol

There are a lot of protocols in the TCP/IP stack. Currently the HTTP and its more secure variant HTTPS (using SSL) are so widely used that they will be granted a specific chapter in this tutorial.

As the name implies the original use of HTTP was to transfer HTML (HyperText Mark-up Language) content from servers to clients. HTML content is text-based and was relatively small.

For reference some of the other application level protocols beside HTTP are:

- FTP (File Transfer Protocol)

- SMTP (Simple Mail Transfer Protocol)

- POP (another email protocol)

- DNS (see above)

- MIME (Multipurpose Internet Mail Extensions)

HTTP client

The HTTP client is a tiny program that manages HTTP requests to a HTTP server and takes care of the response.

The client manages timeouts, sets cookies, forwards headers and more. Most HTTP clients manages the use of base address for relative URIs

A HTTP client generally can perform transactions (request + response) asynchronously as well as in a synchronous way, and a modern web browser basically is a HTTP client with a webkit (HTML rendering engine and a JavaScript engine) and a lot of error management for poorly created HTML/CSS content.

Most programming languages has libraries for HTTP client. The web browser that uses a JavaScript engine has a HTTP client that is in the browser, but also one in the JavaScript engine since xHTTP is implemented in JavaScript. This can be very useful for test automation purposes.

Endpoint

The endpoint is the URL to the server resource used. If the HTTP client holds a base address relative paths could be used. Note that the server might be on the same machine as the client. For example, a URL like 'file:///c/mydirectory/myfile.html' is totally valid.

URI vs URL

While they are used interchangeably, there are some subtle differences. For starters, URI stands for uniform resource identifier and URL stands for uniform resource locator.

Most of the confusion with these two is because they are related. You see, a URI can be a name, locator, or both for an online resource where a URL is just the locator. URLs are a subset of URIs. That means all URLs are URIs. It doesn't work the opposite way though.

Headers

There are a number of HTTP headers included in the HTTP standard. These are however not an exclusive list. Different applications may include header values of their own - and often do.

Different HTTP Clients treat headers differently. For simplicity most HTTP clients use one list of headers, but some separates use of content headers, response message headers, request message headers and so forth.

The headers carry meta information in the HTTP message; encoding, content-type, authorization token, and stuff like that. Generally, it's the Endpoint URL and the HTTP Body that holds the information used by the system.

For an extensive list of HTTP header fields, check out Wikipedia.

The HTTP verbs

The HTTP verbs are used to describe the intention of a transaction. It's up to the implementation to make sure to adhere to the verb. A programmer only tags a method with the verb, and copy-pasting exist, so verb behaviour are not necessarily always as expected.

The verbs sometimes are compared with the CRUD (Create, Read, Update, Delete) operations used in databases, and sometimes HTTP based REST services are treated like stored procedures in distributed databases. The verbs are more than the four CRUD operations:

| HTTP Verb | Description |

|---|---|

| GET | Read |

| POST | Create |

| DELETE | Remove |

| PUT | Update/Replace |

| PATCH | Partial Update/Modify |

As mentioned before, the web browser is basically a HTTP Client mainly used for HTTP GET commands to retrieve HTML content, JavaScripts and CSS:es.

The HTTP body

While the URL Endpoint is the address of a HTTP message, the verb describes the intention.

Cookies

Most HTTP clients support cookies. It's small files with data saved to the local computer. The cookie can have a validity scope regarding time duration, is associated with a certain site, has a path and some relatively short content string.

The same site then may check for content in its own cookies. Since this behaviour is easily overridden cookies are not deemed as a safe way of storing information.

One common use of cookies is to temporarily store authentication tokens during a session.

One alternative to using cookies is using the web browser local storage, if you are working with a browser-based system.

General tricks

SSH / RDP

Connection troubleshooting procedure

If you encounter connection issues, there is a pretty standardized way of identifying where the problem is at. It's based on the OSI model.

| Step# | Step |

|---|---|

| 1 | Open a terminal/command prompt and ping 127.0.0.1. This request will not leave your network interface card driver. |

| 2 | In the terminal, check your IP address (ipconfig/ifconfig) and try to ping this. If this works, your NIC (Network Interface Card) is working. |

| 3 | Ping the local gateway (generally the first three numbers of your own computer, but the last number is 1 |

| 4 | Ping the server you are trying to reach. This will display it's IP-address, and if connection can be established. |

| 5 | Run tracert to see the router path the communication takes. |

Authentication issues

There are a lot of ways to handle authentication. Some of these include:

- Basic authentication

- NTLM/Kerberos

- Password/username combinations

- BankId

- Hard tokens (e.g. for API access)

- Soft tokens (e.g. for use with mobile phones)

- Biometric authentication

NTLM authentication is the Windows logon mechanism, that also works with the LDAP to use with for example an AD. To alter this on runtime the programmatic approach of Impersonation need to be used.

Basic authentication has the advantage of being built into many applications. Any web browser or FTP client can use basic authentication by simply including the logon information in the URL, like this:

https://myusername:mypassword@www.mycompany.com/myresourcepath/myresource.html

In test automation this can come very handy.

Note the difference between authentication: Securing that a person is who he says he is, and Authorization; checking granted user rights for a person.

Sniffers

There are a lot of client-side network sniffers. One of the most widely used, and referenced, ones is the WireShark tool. There are a lot of alternatives to this, but it does the job nicely although it might require you to spend some time with the tutorials before you'll understand how to use it.

The OWASP security site also lists a lot of tools and techniques for networks.

AD - Active Directory and LDAP

The AD is a hierarchical tree of information about user accounts, user groups, computers, computer groups, servers, network components, applications and other things in the IT landscape. It's kind of a hierarchical CMCD, but with a possibility to manage granted user rights and perform lookups.

The most common protocol used to check for user rights is the LDAP (Lightweight Directory Access Protocol). The LDAP protocol is supported by most catalogue services, not only the Microsoft AD. This makes it versatile for multi-platform applications to use.

Often the AD is a proven and well working component, but if the tree depth of the AD gets very deep it's been known to cause performance issues.